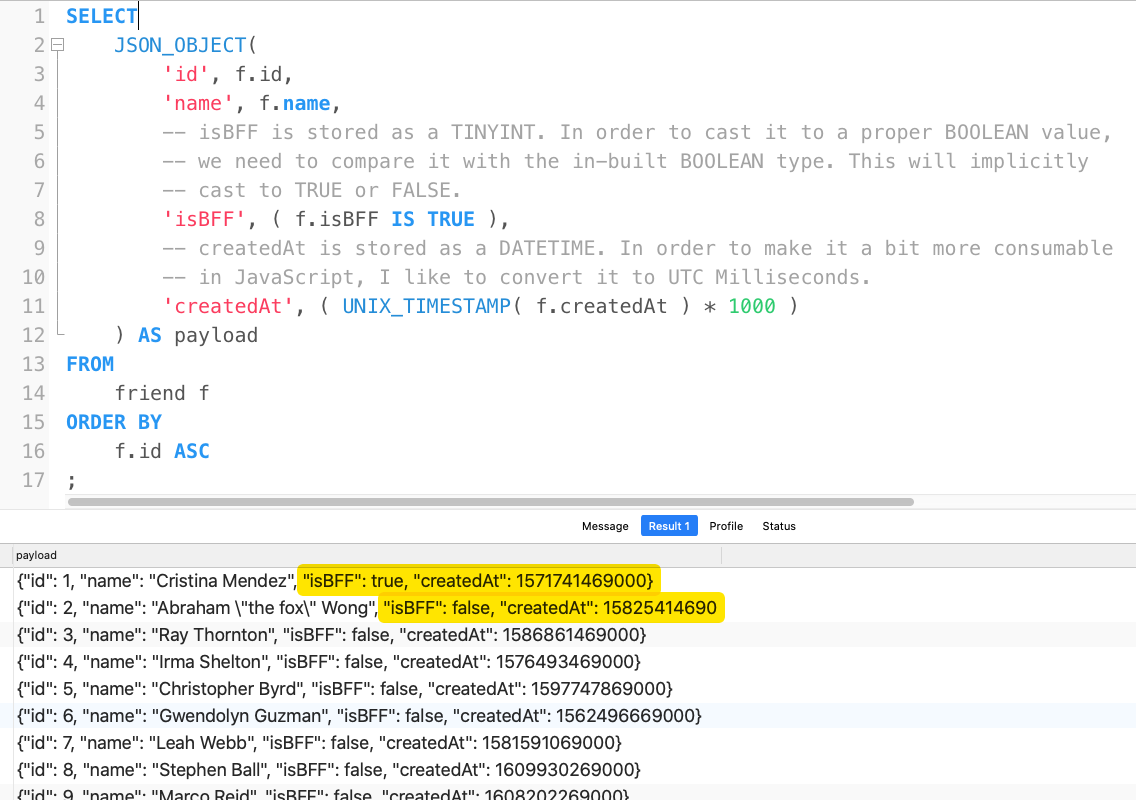

Panoply's AI-driven data pipeline speeds up and manages the entire process; Panoply describes it as the only enterprise-level cloud service that combines automated ETL with a data warehouse. The Panoply JSON integration makes it easy to extract JSON data, combine it with your other data sources, and store it in one secure location. Any data operation, including one that involves JSON files, needs an ETL process to upload, process, and store the JSON and related data. A reverse ETL integration works in the opposite direction: it imports people, objects, and relationships from an Amazon Redshift database so that the people in your workspace reflect it.

JavaScript Object Notation (JSON) is a lightweight data-interchange format based on the JavaScript programming language. Its conventions resemble those of other programming languages, which makes JSON convenient for programmers. Because JSON is easy for both machines and humans to work with, large amounts of source data are often stored in JSON files, and JSON text is commonly used to transfer data between web apps and servers.

Redshift supports machine learning through Redshift ML, which lets developers create, train, and deploy Amazon SageMaker models using SQL. Through Redshift Spectrum, Redshift can also query files in formats such as CSV, Avro, Parquet, JSON, and ORC directly with ANSI SQL.

Each schema in a database contains tables and other kinds of named objects. Once the JSON data is loaded into the target table in Snowflake, verify it by running a select query against the table. Then query the JSON object with a select statement that extracts individual attributes and turns them into columns: `select DEZYRE_CUSTOMER_DATA:address.city::string as City, DEZYRE_CUSTOMER_DATA:address.state::string as state, DEZYRE_CUSTOMER_DATA:address.streetAddress::string as streetNo from dezyre_customer_table;` In the output of the query, each selected attribute of the JSON object appears as its own column. That is how to query JSON data from a table in Snowflake.
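The path expression in the query above, `DEZYRE_CUSTOMER_DATA:address.city::string`, walks from the VARIANT column into the nested `address` object and casts the result to a string. The Python sketch below mimics that lookup on a parsed JSON record so the logic can be tried locally. The sample record and the helper names `get_path`, `as_string`, and `select_columns` are hypothetical, and the string conversion is only a rough analogue of Snowflake's `::string` cast.

```python
import json
from typing import Any

# Hypothetical sample record: the keys mirror the query above,
# but the values are invented for illustration.
RECORD = json.loads(
    '{"firstName": "Jane", '
    '"address": {"streetAddress": "21 2nd Street", "city": "New York", "state": "NY"}}'
)


def get_path(doc: Any, path: str) -> Any:
    """Walk a dotted path such as 'address.city'; return None if any step is missing."""
    current = doc
    for key in path.split("."):
        # Key lookup is case-sensitive, like element names in a JSON path.
        if not isinstance(current, dict) or key not in current:
            return None  # stands in for SQL NULL
        current = current[key]
    return current


def as_string(value: Any) -> Any:
    """Rough analogue of '::string': keep None (NULL), otherwise convert to str."""
    return None if value is None else str(value)


def select_columns(doc: Any, columns: dict[str, str]) -> dict[str, Any]:
    """Project each alias -> path pair into one flat row."""
    return {alias: as_string(get_path(doc, path)) for alias, path in columns.items()}


row = select_columns(RECORD, {
    "City": "address.city",
    "state": "address.state",
    "streetNo": "address.streetAddress",
})
print(row)
```

A missing path (for example `address.zip`) yields `None`, much as the SQL query returns NULL for an absent element, and `address.City` also yields `None` because element names are matched case-sensitively.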
Redshift has long supported querying and manipulating JSON-formatted data. Previously you might have stored JSON in a VARCHAR column, or accessed and unnested formatted files through Redshift Spectrum and external tables, so native JSON support in Redshift is a welcome addition. The reverse-engineering function, if it detects JSON documents in a column, …

In Snowflake, `CREATE TABLE` creates a new table in the current or specified schema, or replaces an existing table. Here we create a temporary table with a single VARIANT column to hold the JSON: `create or replace temporary table dezyre_customer_table (dezyre_customer_data variant);` Next, upload the JSON data file from your local system to the Snowflake internal stage with the PUT command: `put file://D:\customer.json @custome_temp_int_stage;` We created the JSON object as key:value pairs whose keys were first name, last name, and gender. Finally, load the JSON data from the internal stage into the target table. With the data loaded, we can query and manipulate the JSON object.
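To make the load step concrete, here is a minimal Python sketch under stated assumptions: the customer objects are built as key:value pairs (the key names `firstName`, `lastName`, and `gender` and all values are hypothetical), written one object per line to a local `customer.json`, and read back so that each object becomes one row with a single VARIANT-like column named after `dezyre_customer_data`. It only models what the PUT and load steps achieve; it does not connect to Snowflake.

```python
import json
import tempfile
from pathlib import Path
from typing import Any

# Hypothetical records built as key:value pairs (first name, last name, gender).
customers = [
    {"firstName": "Jane", "lastName": "Doe", "gender": "female"},
    {"firstName": "John", "lastName": "Smith", "gender": "male"},
]


def write_ndjson(path: Path, records: list[dict[str, Any]]) -> None:
    """Write one JSON object per line (newline-delimited JSON)."""
    with path.open("w", encoding="utf-8") as f:
        for rec in records:
            f.write(json.dumps(rec) + "\n")


def load_variant_rows(path: Path) -> list[dict[str, Any]]:
    """Parse each non-empty line into a row holding one VARIANT-like column."""
    rows = []
    with path.open(encoding="utf-8") as f:
        for line in f:
            if line.strip():
                rows.append({"dezyre_customer_data": json.loads(line)})
    return rows


with tempfile.TemporaryDirectory() as tmp:
    file_path = Path(tmp) / "customer.json"
    write_ndjson(file_path, customers)
    table = load_variant_rows(file_path)  # read before the temp dir is deleted

for r in table:
    data = r["dezyre_customer_data"]
    print(data["firstName"], data["gender"])
```

Keeping one object per row in a single column is what lets the later query pull attributes out with path expressions instead of fixing a column layout up front.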

Step 5: Create Table in Snowflake using Create Statement

Before creating the table, set up the database, file format, and stage. Follow the steps provided in the link above, then select the database you created earlier with the `use` statement. `CREATE FILE FORMAT` creates a named file format that describes a set of staged data to access or load into Snowflake tables; for this data the format type is JSON (`TYPE = JSON`). Then create a temporary internal stage: `create temporary stage custome_temp_int_stage;`
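A JSON file format also controls how a staged file is split into rows. As an assumption beyond the original steps, the sketch below mimics Snowflake's `STRIP_OUTER_ARRAY` JSON file-format option in plain Python: with the option on, each element of a top-level array becomes its own row in the VARIANT column; with it off, the whole array lands in a single row. The function name `rows_from_json_document` is hypothetical, and this is a local illustration only, not Snowflake's loader.

```python
import json
from typing import Any


def rows_from_json_document(text: str, strip_outer_array: bool) -> list[Any]:
    """Return the values that would land in a single VARIANT column.

    Mimics STRIP_OUTER_ARRAY: when enabled and the document is a top-level
    array, each element becomes its own row; otherwise the whole document
    is one row.
    """
    doc = json.loads(text)
    if strip_outer_array and isinstance(doc, list):
        return list(doc)
    return [doc]


file_text = '[{"firstName": "Jane"}, {"firstName": "John"}]'
print(len(rows_from_json_document(file_text, strip_outer_array=False)))  # 1
print(len(rows_from_json_document(file_text, strip_outer_array=True)))   # 2
```

If a file wraps all customers in one array, leaving the option off would put every customer into a single row, which the per-attribute query would then not flatten.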

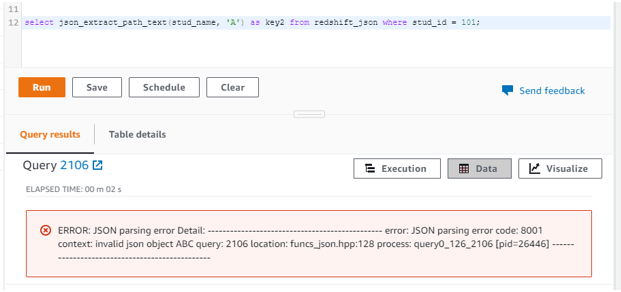

We need to log in to the Snowflake account by providing our credentials.

Since April 2021, Amazon Redshift has provided native support for JSON through the SUPER data type, which makes JSON data much easier to work with. First, convert your JSON column into the SUPER data type using the JSON_PARSE() function. SUPER provides advanced features such as dynamic typing and object unpivoting (see the AWS documentation).
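Object unpivoting turns each key/value pair of a SUPER object into its own row. The Python sketch below imitates that on a local list of JSON strings: `json_parse` stands in for Redshift's `JSON_PARSE()` and `unpivot_object` for the unpivot step. The table contents and helper names are hypothetical; in Redshift itself this is written in SQL with an `UNPIVOT ... AS value AT attribute` clause in the FROM list, so check the AWS documentation for the exact syntax.

```python
import json
from typing import Any, Iterator

# Hypothetical table: each row holds a JSON string, as a VARCHAR column might.
raw_rows = [
    '{"city": "New York", "state": "NY"}',
    '{"city": "Boston"}',
]


def json_parse(text: str) -> Any:
    """Stand-in for JSON_PARSE(): turn JSON text into a navigable value."""
    return json.loads(text)


def unpivot_object(obj: Any) -> Iterator[tuple[str, Any]]:
    """Yield (attribute, value) pairs; non-objects yield nothing in this sketch."""
    if isinstance(obj, dict):
        yield from obj.items()


pairs = [pair for text in raw_rows for pair in unpivot_object(json_parse(text))]
for attr, val in pairs:
    print(attr, val)
```

Because each row can carry a different set of keys, unpivoting avoids declaring one column per attribute, which is the flexibility that dynamic typing in SUPER is meant to provide.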
